AI systems today depend on vast amounts of accurately labeled data to learn patterns, make predictions, and adapt to real-world tasks. Without structured, annotated data with meaningful context, even the most advanced models struggle to perform reliably. Scale AI exists to solve this foundational challenge by providing data labeling, annotation services, and related infrastructure that help companies train and refine artificial intelligence systems at scale. Its platform combines automation with human review to ensure data quality, a critical factor for effective machine learning.

This article explains what Scale AI is, its role in the AI ecosystem, and why its approach to data labeling and human-in-the-loop workflows has become important for organizations building serious AI systems. The focus is on practical understanding rather than marketing claims, with the goal of clarifying where Scale AI fits and who actually benefits from its services. I’ll approach the topic from the perspective of real-world AI development needs.

What Is Scale AI

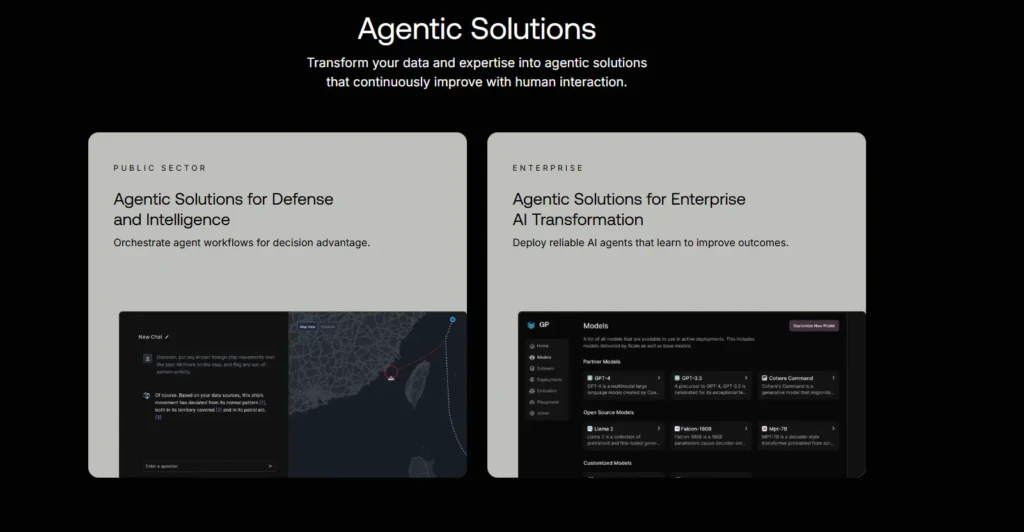

Scale AI is a data-centric artificial intelligence company focused on providing high-quality training data and related infrastructure for machine learning models. In essence, it bridges a critical gap between raw unstructured data and the curated, structured datasets required to train AI systems effectively. Its offerings are used by companies developing autonomous systems, natural language processing models, computer vision tools, and other AI applications that depend on accurately labeled data.

Unlike generic data storage or cloud services, Scale AI specializes in transforming raw data, such as images, text, sensor feeds, and video, into formats that machine learning algorithms can meaningfully process. This role has made it a strategic partner for many organizations prioritizing AI R&D and deployment.

What Scale AI Does

At a fundamental level, Scale AI provides data annotation, labeling, and validation services that let machine learning models learn from labeled examples rather than from raw, unannotated text or images. Without labels, models lack the structured context they need to accurately identify concepts such as objects in images or intent in language.

Scale AI’s platform and services include:

- Image and sensor data labeling for systems such as autonomous vehicles and robotics

- Text annotation and categorization for natural language processing tasks

- Human-in-the-loop review workflows that combine machine speed with human judgment

- Quality control tools that ensure consistency and accuracy across large datasets

These services help ensure that machine learning models are built on clean, comprehensive, and vetted datasets, which directly influences performance and reliability.
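To make the quality-control idea concrete, here is a minimal sketch of one common consistency check: collecting labels from several annotators and flagging items where they disagree. This is a generic illustration, not Scale AI's actual tooling; the `consensus` function, the sample `annotations`, and the 0.6 agreement threshold are all assumptions chosen for the example.

```python
from collections import Counter


def consensus(labels):
    """Return (majority_label, agreement) for one item's annotator labels.

    Agreement is the share of annotators who chose the majority label.
    """
    counts = Counter(labels)
    label, votes = counts.most_common(1)[0]
    return label, votes / len(labels)


# Hypothetical annotations: three annotators per item.
annotations = {
    "doc-1": ["positive", "positive", "positive"],
    "doc-2": ["positive", "negative", "positive"],
    "doc-3": ["neutral", "negative", "positive"],
}

AGREEMENT_THRESHOLD = 0.6  # assumed cutoff; tune per project

flagged = []
for item_id, labels in annotations.items():
    label, agreement = consensus(labels)
    if agreement < AGREEMENT_THRESHOLD:
        # Low agreement: send the item back for another review pass.
        flagged.append(item_id)

print(flagged)
```

In this sketch, items with low agreement would be routed back to reviewers instead of entering the training set, which is one simple way to keep quality consistent across a large dataset.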

How Scale AI Works

Scale AI’s workflow typically starts with clients uploading or integrating raw unstructured data through an application programming interface (API) or platform interface. This data then enters a labeling pipeline that may involve:

- Automated pre-labeling by machine learning tools to accelerate basic tagging

- Human review and correction to ensure high accuracy, especially for edge cases

- Iterative feedback loops where labeled results are refined over time

This hybrid model, combining AI with human verification, balances speed with precision, particularly in complex scenarios where purely automated labeling may fall short. The result is a dataset that machine learning models can use more effectively during training and evaluation phases.
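The hybrid pipeline described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not Scale AI's implementation: the `Item` class, the `route` and `apply_review` helpers, and the 0.9 confidence threshold are hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Item:
    item_id: str
    predicted_label: str  # label proposed by automated pre-labeling
    confidence: float     # model confidence in that label, 0.0 to 1.0


def route(items, threshold=0.9):
    """Split pre-labeled items into auto-accepted and human-review queues."""
    auto_accepted, needs_review = [], []
    for item in items:
        if item.confidence >= threshold:
            auto_accepted.append(item)
        else:
            needs_review.append(item)
    return auto_accepted, needs_review


def apply_review(needs_review, corrections):
    """Apply human corrections; uncorrected items keep the model's label."""
    return {
        item.item_id: corrections.get(item.item_id, item.predicted_label)
        for item in needs_review
    }


# Hypothetical pre-labeled batch.
items = [
    Item("img-1", "car", 0.98),
    Item("img-2", "truck", 0.55),
    Item("img-3", "pedestrian", 0.91),
]

auto, review = route(items)
final = {item.item_id: item.predicted_label for item in auto}
# A human reviewer corrects the low-confidence item.
final.update(apply_review(review, {"img-2": "bus"}))
print(final)
```

The design choice here is that only low-confidence items reach a human, so reviewer time is spent on the edge cases. In an iterative setup, the corrected labels would then be fed back to improve the pre-labeling model.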

Scale AI Use Cases

Scale AI’s services span a range of industries, but some of the most prominent use cases include:

- Autonomous Vehicles: Labeling sensor and image data to train perception systems for cars and drones.

- Computer Vision Applications: Helping models recognize objects, surfaces, and scenes in images and video.

- Natural Language Understanding: Annotating text to improve language models’ comprehension and classification abilities.

- Enterprise Search and Recommendation Systems: Structuring diverse data so search tools yield more relevant, contextually accurate results.

These use cases reflect the broad applicability of high-quality labeled data across machine learning and AI projects.

Why Scale AI Matters

The quality of training data is widely recognized as one of the most significant determinants of machine learning model performance. A commonly cited industry estimate holds that as much as 80–90% of the effort in building effective AI systems goes into data preparation and labeling, rather than model architecture or algorithm selection.

Therefore, platforms like Scale AI are critical because they help organizations overcome bottlenecks associated with:

- Insufficient labeling resources

- Inconsistent quality across large datasets

- Complex data types, such as lidar or multi-modal sensor feeds

By centralizing and standardizing data labeling workflows, Scale AI enables teams to accelerate AI development timelines and focus more on innovation and deployment.

Scale AI vs Other Data Labeling Platforms

Given the importance of labeling, many companies offer annotation tools and services; however, Scale AI differentiates itself through:

- Hybrid human-machine workflows that mix automation with expert review

- Enterprise-scale orchestration, capable of handling massive datasets

- API-based integration that fits into existing AI development pipelines

In comparison, smaller annotation tools may be easier to set up but lack the scalability and quality-control mechanisms required for mission-critical AI systems.

| Feature | Scale AI | General Annotation Tools |
| --- | --- | --- |
| Human-in-the-Loop Workflows | Yes | Sometimes |
| API Integration | Enterprise-grade | Varies |
| Automated Pre-labeling | Yes | Limited |
| Quality Evaluation Tools | Yes | Limited |

This table highlights the practical differences that matter when teams need reliable annotation at scale.

Who Uses Scale AI

Scale AI primarily serves business-to-business (B2B) customers developing machine learning applications. These include:

- Large tech companies building autonomous systems and foundation models

- Enterprises integrating AI into operational workflows

- Research institutions requiring high-quality datasets

- Government and defense organizations using AI in specialized domains

This range of users reflects the essential role of data in powering a wide spectrum of AI technologies.

Limitations and Criticisms

Despite its significance, Scale AI’s model also faces challenges:

- Cost and Scale Requirements: High-quality annotation at enterprise levels can be expensive for smaller teams.

- Dependency on Human Labor: Even with automation, human review remains central, introducing variability in speed and output.

- Security Concerns: Reports have highlighted risks related to how sensitive training data and project files are managed.

These considerations underscore the importance of assessing organizational needs and constraints before committing to large-scale data annotation services.

Is Scale AI Only for Large Companies

While Scale AI’s platform excels in large-scale deployments, smaller organizations and teams with limited budgets may find its services more than they require. For those cases, alternatives include specialized open-source annotation tools, outsourced annotation providers, or internally managed labeling processes. The decision often hinges on project size, required accuracy, and integration needs.

Conclusion

Scale AI occupies a critical position in modern AI development by addressing one of the most difficult and time-consuming parts of building machine learning systems: producing high-quality training data at scale. Its combination of automation, human review, and enterprise-level tooling makes it particularly valuable for organizations working on complex models where accuracy, consistency, and reliability directly impact outcomes. At the same time, its services are best suited for teams with substantial data volumes and clearly defined AI objectives, rather than smaller or experimental projects.

Viewed in context, Scale AI complements other parts of the AI toolchain rather than replacing them. Language models such as GPT-4, developer-focused tools such as GitHub Copilot, and broader model comparisons like DeepSeek vs ChatGPT all address different stages of the AI lifecycle. Looking at these tools together has reinforced my view that Scale AI’s real value lies in strengthening the data foundation on which everything else depends. I see it as infrastructure that becomes increasingly important as AI systems move from experimentation to production.

FAQs About Scale AI

Scale AI is not strictly a data labeling company. While data labeling is core, its tools also support evaluation, alignment, and hybrid workflows for AI development.

No. Its focus is on data and infrastructure that enable AI models to be trained more effectively.

Scale AI can be used by small teams, but enterprise-oriented pricing and scale may make it more cost-effective for larger projects.

At Your Tech Compass, we publish detailed tech guides, reviews, and comparisons to help users choose the right devices and tools.