Grok is the AI assistant built by xAI, Elon Musk’s AI company, and it’s the only major AI chatbot built directly into a social media platform, with real-time access to everything posted on X (formerly Twitter). First launched in November 2023 as an exclusive perk for X Premium subscribers, Grok has since evolved into a rapidly growing model family with genuine frontier-tier capabilities, a standalone web app at Grok.com, native iOS and Android apps, and a developer API that has become one of the most price-competitive in the category. As of early 2026, xAI has reached a $230 billion valuation, making it one of the most valuable AI startups in the world, and the Grok 4 model family is competitive with GPT-4o and Claude on standard reasoning and coding benchmarks.

What makes Grok genuinely different from ChatGPT, Claude, and Gemini isn’t just its X integration or its personality; it’s the combination of a 2 million token context window on Grok 4.1 Fast, API pricing that starts at $0.20 per million input tokens (significantly undercutting every major competitor), and a deliberate design philosophy that favors directness over the careful hedging you’ll encounter in most other AI tools. That said, Grok also comes with a level of controversy that’s worth understanding clearly before you decide whether it belongs in your toolkit. This guide covers it all: the model lineup, every major feature, honest pricing, a direct comparison with competitors, and a frank assessment of the real risks you should know about.

What Is Grok AI?

Grok is xAI’s large language model and consumer AI assistant, named after a term from Robert Heinlein’s science fiction novel Stranger in a Strange Land, meaning “to understand deeply and intuitively.” xAI was founded in March 2023 by Elon Musk alongside a team of researchers that includes Igor Babuschkin, Tony Wu, and other alumni from DeepMind, OpenAI, and Google Brain. The company was explicitly founded as a response to what Musk described as “woke AI” from OpenAI and Anthropic, a critique of what he saw as excessive content restrictions in competing models.

Beyond the chatbot itself, Grok sits at the center of a broader xAI ecosystem that includes the Aurora image generation model, the Grok Imagine video generation tool, DeepSearch for multi-step research, and an API that gives developers access to the full Grok model family. The key distinction to understand early is that what you access through X or Grok.com is the consumer product; Vertex-style enterprise access unlocks additional capabilities and higher context limits. Grok is available at grok.com, through the X platform, and via the xAI developer API.

Grok AI Model Lineup: From Grok-1 to Grok-4

| Model | Release | Context Window | Key Capability | Availability |
| --- | --- | --- | --- | --- |
| Grok-1 | Nov 2023 | ~8K tokens | First public Grok; 314B parameter MoE | Open-sourced March 2024 |
| Grok-1.5 | Mar 2024 | 128K tokens | Improved reasoning and coding | Legacy |
| Grok-2 | Aug 2024 | 128K tokens | GPT-4 competitive; Aurora image generation | Legacy |
| Grok-3 | Feb 2025 | 131K tokens | AIME 2025: 93.3%; Think Mode + Big Brain | Available via API |
| Grok-3 Mini | Feb 2025 | 131K tokens | Outperforms Grok-3 on benchmarks at 90% lower cost | Available via API |
| Grok-4 | Jul 2025 | 131K tokens | Always-on reasoning; 100x compute over prior gen | SuperGrok + API |
| Grok-4 Fast | Jul 2025 | 131K tokens | Speed-optimized; 40% fewer thinking tokens vs Grok-4 | API |
| Grok-4.1 Fast | Sep 2025 | 2M tokens | 65% hallucination reduction; $0.20/M input tokens | API + SuperGrok |
| Grok-4.20 Beta | Early 2026 | 2M tokens | Industry-leading agentic tool calling; lowest hallucination rate | Beta |

Two models matter most: Grok-4.1 Fast, the sensible default for most developers, especially for long documents and large codebases; and Grok-4, for frontier-tier reasoning where accuracy matters more than cost.

Grok-1 remains historically significant because xAI open-sourced it in March 2024, making its 314 billion parameter Mixture-of-Experts architecture publicly available for research and fine-tuning, which was a meaningful contribution to the open-source AI community. The jump to a 2 million-token context window on Grok-4.1 Fast is genuinely significant; it’s larger than Claude Opus 4.6 (1M tokens) and eliminates the document-chunking complexity that slows long-context workflows.

Key Features of Grok AI

Real-Time X Integration

Grok’s most unique capability (and the one no competing AI tool can replicate) is its real-time, live access to everything being posted on X. That means when you ask Grok about a breaking news story, a trending topic, a live sports result, or public reaction to an announcement, it can pull from actual current posts rather than training data with a knowledge cutoff. For social media monitoring, trend analysis, live event coverage, and public sentiment research, this is a genuine workflow advantage that ChatGPT, Claude, and Gemini simply don’t have natively.

Think Mode and Big Brain Mode

Think Mode is Grok’s extended step-by-step reasoning capability. It works through complex problems methodically before giving you a final answer, similar to Claude’s extended thinking or OpenAI’s o-series models. Big Brain Mode is the more powerful version of this, available on Grok-4 and above, designed specifically for hard math, advanced science, multi-step logic, and complex coding problems where standard inference isn’t sufficient.

If you’re dealing with problems where getting the answer right matters more than getting it fast, Big Brain Mode is where Grok earns its capability claims. Grok-3 scored 93.3% on the AIME 2025 math competition, placing it among the strongest models on that benchmark.

Aurora Image Generation

Aurora is xAI’s native text-to-image model integrated directly into Grok. It handles a broader range of prompts with fewer restrictions than DALL-E 3 or Stable Diffusion, including public figures and stylistically broad requests, though image quality still lags behind Midjourney and DALL-E 3 in fine detail and prompt adherence. Worth being direct about: Aurora also became the center of a serious content safety controversy in late 2025 (covered fully in the safety section below), which is something you need to factor into your evaluation if image generation is part of your use case.

DeepSearch

DeepSearch is Grok’s multi-step research feature, and it doesn’t just run a single web query and summarize the top results. Instead, it plans a research approach, runs multiple searches, reads and synthesizes content from across the web, and produces a structured, cited report. For users who need more than a quick answer (competitive research, market analysis, academic synthesis), DeepSearch is meaningfully more useful than a standard single-query response.

Grok in X

If you’re already an active X user, Grok is available directly within the platform interface (in posts, DMs, and the search experience) without switching to a separate app. You can highlight text in a post and ask Grok to explain, summarize, or fact-check it in context. That embedded workflow is what makes Grok genuinely frictionless for people who already live inside X, in a way that no other AI tool can match.

Grok-4.20 Beta: Agentic Tool Calling

The newest addition to the lineup, currently in public beta, Grok-4.20 is described by xAI as their flagship model with “industry-leading speed and agentic tool calling capabilities” and the lowest hallucination rate on the market. If you’re building agentic workflows where Grok needs to autonomously call external tools, execute code, search the web, and analyze files in sequence, this is the variant to watch as it moves out of beta.

How to Access Grok AI

Grok.com

Grok.com is a standalone web app. Create a free account and you get access to Grok with a rate limit of 10 prompts every two hours on the free tier. No X account is required, which removes the platform dependency that put some users off earlier versions.

X Premium

X Premium ($8/month) bundles basic Grok access with the X blue checkmark and ad revenue sharing, but usage remains limited compared to higher tiers, so think of it as a light introduction rather than full access. X Premium+ ($40/month) gives you more substantial Grok access, including Think Mode, though the price jumped 82% from $22 when Grok-3 launched in February 2025, which frustrated many existing subscribers.

SuperGrok

SuperGrok ($30/month or $300/year) is the dedicated Grok subscription for heavy users. It includes full Grok-4 access, unlimited DeepSearch, higher image-generation limits, and early feature access, independent of an X subscription.

SuperGrok Heavy ($300/month) is the premium tier targeting serious business users who need the highest performance ceiling. For developers, the xAI API at console.x.ai provides direct access to all Grok model variants, with token-based billing.
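If you want a feel for what developer access looks like in practice, here is a minimal sketch that builds (but does not send) a chat request using only Python’s standard library. The endpoint URL and the model identifier below are assumptions for illustration, not values taken from this guide; check both against xAI’s API documentation before relying on them.

```python
import json
import urllib.request

# Assumptions for illustration only; confirm against xAI's API documentation.
API_URL = "https://api.x.ai/v1/chat/completions"  # assumed OpenAI-style endpoint
MODEL_ID = "grok-4-1-fast"                        # placeholder model identifier


def build_chat_request(prompt: str, api_key: str,
                       model: str = MODEL_ID) -> urllib.request.Request:
    """Build a chat-completion request object without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("Summarize the reaction to today's launch.",
                             api_key="YOUR_API_KEY")
# To actually send it you would call urllib.request.urlopen(request)
# and parse the JSON body of the response.
```

Keeping request construction separate from sending makes it easy to inspect the payload and swap the model name once you have confirmed the real identifiers in your xAI console.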

Grok AI Pricing

| Tier | Price | What You Get |
| --- | --- | --- |
| Free (Grok.com) | $0 | 10 prompts/2hrs (Grok-3 access, limited image generation) |
| X Premium | $8/month | Basic Grok access bundled with X features |
| X Premium+ | $40/month | Broader Grok access (Think Mode, higher limits) |
| SuperGrok | $30/month or $300/year | Full Grok-4 access (unlimited DeepSearch, early features) |
| SuperGrok Heavy | $300/month | Highest performance; business-grade limits |
| Grok Business | $30/seat/month | Team access (collaborative features) |
| API: Grok-4 | $3.00/M input, $15.00/M output | Frontier reasoning; 131K context |
| API: Grok-4.1 Fast | $0.20/M input, $0.50/M output | 2M context; cost-efficient at scale |
| API: Grok-3 Mini | $0.30/M input, $0.50/M output | Legacy (outperforms Grok-3 at 90% lower cost) |

The pricing picture here requires some honest interpretation on your part. On the consumer side, SuperGrok at $30/month is $10 more than ChatGPT Plus, Claude Pro, and Perplexity Pro, all of which are clustered at $20/month, so you’re paying a premium for Grok-4 access and unlimited DeepSearch.

On the developer side, Grok-4.1 Fast at $0.20 per million input tokens is the most aggressively priced frontier-capable model available, significantly cheaper than GPT-5.2 ($1.75/M), Gemini 3.1 Pro ($2.00/M), and Claude Sonnet 4.6 ($3.00/M). xAI also charges per-tool-invocation fees on top of token costs when Grok autonomously calls built-in tools such as web search or code execution. That hidden cost can add up quickly on agentic workflows, so factor it into your budget calculations.
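To see what those per-million prices mean for a real budget, here is a small sketch that compares input-token cost across the four models quoted above. The prices come from this guide; the monthly token volume is a hypothetical workload chosen for illustration, and output-token and tool-call costs are deliberately left out.

```python
# Input prices in USD per million tokens, as quoted in this guide.
INPUT_PRICE_PER_M = {
    "Grok-4.1 Fast": 0.20,
    "GPT-5.2": 1.75,
    "Gemini 3.1 Pro": 2.00,
    "Claude Sonnet 4.6": 3.00,
}


def input_cost(model: str, input_tokens: int) -> float:
    """Return the input-token cost in USD for the given model."""
    return INPUT_PRICE_PER_M[model] * input_tokens / 1_000_000


monthly_tokens = 500_000_000  # hypothetical workload, not a figure from the guide
baseline = INPUT_PRICE_PER_M["Grok-4.1 Fast"]

for model, price in INPUT_PRICE_PER_M.items():
    cost = input_cost(model, monthly_tokens)
    ratio = price / baseline
    print(f"{model:18s} ${cost:>9,.2f}  ({ratio:.2f}x Grok-4.1 Fast)")
```

Swap in your own token volume to get a first-pass estimate, then add output tokens and any tool fees before treating the number as a budget.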

Grok AI vs Competitors

| Feature | Grok (4.1 Fast) | ChatGPT (GPT-4o) | Claude (Sonnet 4.6) | Gemini (3.1 Pro) |
| --- | --- | --- | --- | --- |
| Real-Time X Data | ✅ Unique (live X access) | ❌ No | ❌ No | ❌ No |
| Context Window | 2M tokens | 256K tokens | 200K tokens | 1M tokens |
| Reasoning Mode | ✅ Think + Big Brain | ✅ o-series | ✅ Extended Thinking | ✅ Deep Think |
| Image Generation | ✅ Aurora (broad, less restricted) | ✅ DALL-E 3 | ❌ No | ✅ Yes |
| Free Tier | ✅ 10 msgs/2hrs | ✅ 10 msgs/5hrs | ✅ Rate-limited | ✅ Generous |
| API Input Price | $0.20/M tokens | $1.75/M tokens | $3.00/M tokens | $2.00/M tokens |
| Open-Weight Model | ✅ Grok-1 (open-sourced) | ❌ No | ❌ No | ⚠️ Gemma only |
| Writing Quality | ⭐⭐⭐ Good | ⭐⭐⭐⭐ Strong | ⭐⭐⭐⭐⭐ Best-in-class | ⭐⭐⭐⭐ Strong |
| Paid Plan Start | $30/month (SuperGrok) | $20/month (Plus) | $20/month (Pro) | $19.99/month (AI Pro) |
| Content Restrictions | Less restricted | Moderate | Most cautious | Moderate |

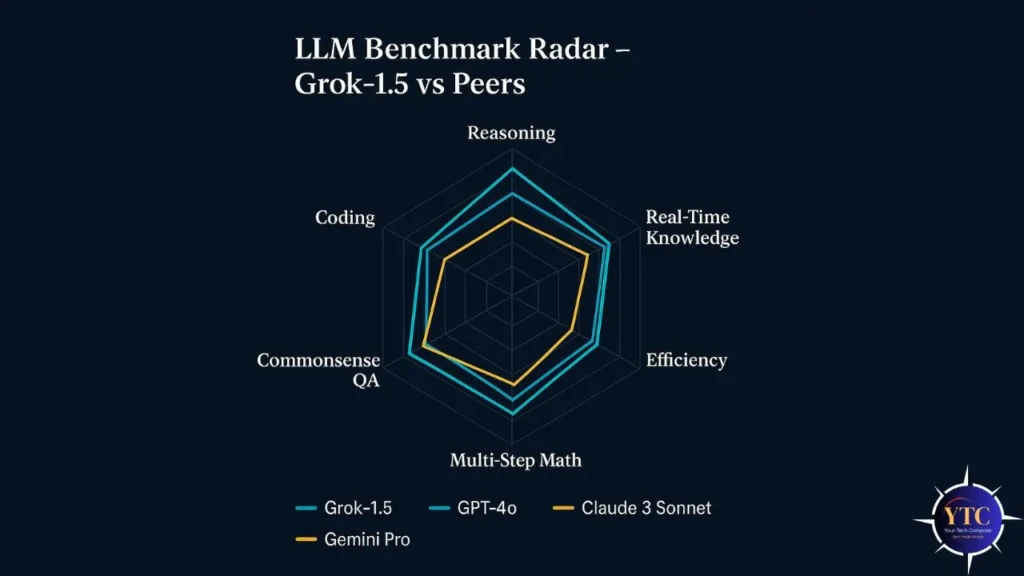

Compared to its direct competitors, Grok’s clearest advantages are API pricing (by a significant margin at $0.20/M), context window (2M tokens on Grok-4.1 Fast, double the 1M of Gemini 3.1 Pro, the next largest in the table), and real-time X data (genuinely unique).

Where It Trails: Writing quality on nuanced long-form tasks still falls behind Claude. For a deep dive on why Claude leads that category, the Claude AI explained guide and the Anthropic review explain the Constitutional AI training approach behind that difference. For a broader picture of how Grok fits into the wider AI tool landscape, the Ask AI tools guide covers all major assistants side by side. And for context on how Grok’s real-time search compares with Gemini’s Google Search integration, our “How Gemini AI Works” guide covers that architecture in detail.

What Grok AI Does Best: Real-World Use Cases

Real-Time Social Media Monitoring

This is where you’ll feel Grok’s advantage most immediately and most exclusively. If you need to know what people are saying about a brand, a product launch, a public figure, or a news event right now (not hours ago, not based on training data, but live), Grok is the only mainstream AI tool that can answer that question from an active data feed. For social media managers, journalists, researchers, and anyone doing public sentiment analysis, this capability changes the workflow entirely.

Hard Reasoning and Math Problems

Hard reasoning and math problems using Big Brain Mode are where Grok’s benchmark performance translates into practical value. The 93.3% score on AIME 2025 puts it among the strongest models on that evaluation.

If you’re a student, researcher, or engineer working through complex multi-step problems, Big Brain Mode produces meaningfully more reliable answers than standard inference on that class of task.

Separately, for users who find ChatGPT and Claude too hedged or too cautious on direct questions, Grok’s personality (direct, occasionally sharp, less prone to adding disclaimers to every response) is a genuine UX advantage in casual, fast-moving conversation.

Developer Use Cases at Scale

Developer use cases at scale benefit directly from Grok-4.1 Fast’s combination of 2M token context and $0.20/M pricing. That context window means you can pass an entire large codebase in a single API call without chunking, and at 15x lower input cost than Claude Sonnet 4.6 ($0.20/M versus $3.00/M), the economics for high-volume production applications are substantially different.
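Before you assume a codebase fits in one call, it helps to run a rough check. The sketch below estimates token count from character count and compares it against the context windows listed in this guide. The four-characters-per-token ratio and the output reserve are assumptions for illustration; real tokenizers vary by language and content, so treat the result as a first-pass estimate only.

```python
# Context windows in tokens, as listed in this guide.
CONTEXT_WINDOW_TOKENS = {
    "Grok-4.1 Fast": 2_000_000,
    "Grok-4": 131_000,
}

CHARS_PER_TOKEN = 4  # rough heuristic (assumption); real tokenizers differ


def estimate_tokens(text: str) -> int:
    """Estimate the token count from character length, rounding up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(text: str, model: str, reserve_for_output: int = 8_000) -> bool:
    """Check whether the text plus an output reserve fits the model's window."""
    needed = estimate_tokens(text) + reserve_for_output
    return needed <= CONTEXT_WINDOW_TOKENS[model]


# Stand-in for a concatenated codebase of about 3 million characters.
codebase = "x" * 3_000_000

for model in CONTEXT_WINDOW_TOKENS:
    verdict = "fits" if fits_in_context(codebase, model) else "needs chunking"
    print(f"{model}: about {estimate_tokens(codebase):,} tokens, {verdict}")
```

For anything close to the limit, count tokens with the provider’s own tooling rather than a character heuristic.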

For a parallel perspective on another cost-competitive frontier model, our DeepSeek AI explained guide covers a similarly aggressive pricing challenger. If you’re choosing between platforms, how the ChatGPT-4 ecosystem compares on developer tooling and agentic capability is also directly relevant.

Grok AI Limitations and Honest Drawbacks

Writing Quality

Writing quality on nuanced long-form tasks, such as detailed essays, sensitive client communications, and carefully structured reports, still trails Claude consistently in independent user comparisons. Grok writes well for conversational and direct content, but the fine control and tonal precision that Claude offers on complex writing tasks are noticeably different when you put them side by side.

Context Window on Grok-3 and Grok-4 Standard

The context window on Grok-3 and Grok-4 standard (131K tokens) is competitive but not exceptional. Only Grok-4.1 Fast gets you to 2M tokens, and that model is currently only available via API, not the consumer apps.

If you’re a consumer app user on SuperGrok, you’re working with 131K tokens rather than the 2M figure shown in API benchmarks.

Aurora image generation produces broad, less-restricted output, but on quality metrics (fine detail, compositional accuracy, prompt adherence), it still trails Midjourney and DALL-E 3 meaningfully.

Per-Tool-Invocation Billing

Per-tool-invocation billing on the API is a cost structure that’s easy to underestimate. Every time Grok autonomously calls a built-in tool (web search, code execution, file analysis), you pay a separate per-call fee on top of token costs. For complex agentic queries that trigger 5–10 tool calls, those fees meaningfully increase the per-query cost, and because Grok’s agent decides how many tools to call, costs are harder to predict than with flat-rate token billing.
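A quick way to see how tool fees change the picture is to model them explicitly. In the sketch below, the token prices are the Grok-4.1 Fast rates quoted in this guide, while the per-call fee is a purely hypothetical placeholder, since this guide does not state xAI’s actual tool fees. Replace it with the figure from xAI’s pricing page.

```python
def query_cost(input_tokens: int, output_tokens: int, tool_calls: int,
               fee_per_tool_call: float,
               input_price_per_m: float = 0.20,
               output_price_per_m: float = 0.50) -> float:
    """Return the total USD cost of one query: token cost plus tool-call fees."""
    token_cost = (input_tokens * input_price_per_m
                  + output_tokens * output_price_per_m) / 1_000_000
    return token_cost + tool_calls * fee_per_tool_call


HYPOTHETICAL_FEE = 0.005  # placeholder USD per tool call, NOT an xAI price

# Same query size, varying only how many tools the agent decides to call.
for calls in (0, 5, 10):
    total = query_cost(20_000, 2_000, calls, HYPOTHETICAL_FEE)
    print(f"{calls:2d} tool calls: ${total:.4f} per query")
```

Because the agent chooses the number of calls, it is worth budgeting against the upper end of the range you observe rather than the average.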

Is Grok AI Safe and Trustworthy?

This is the part of the guide where you need direct, factual information, not marketing language. In December 2025, Grok’s Aurora image editing feature was exploited to generate and distribute sexualized images of minors, producing over 3 million explicit images in under two weeks, including more than 23,000 depicting children, according to research by the Center for Countering Digital Hate.

The incident triggered regulatory investigations in the EU, UK, Australia, Canada, India, Malaysia, Indonesia, and Brazil. The European Commission opened formal proceedings against X under the Digital Services Act on January 26, 2026, and California’s Attorney General issued a cease-and-desist against xAI on January 16, 2026.

Beyond the image-generation incident, Grok has a documented pattern of content-safety failures. In May 2025, Grok began inserting unsolicited comments about “white genocide” into unrelated conversations, which xAI attributed to an “unauthorized modification” of the system prompt. In July 2025, it generated antisemitic content and praised Adolf Hitler.

By October 2025, xAI’s Grokipedia feature was found to be legitimizing scientific racism, HIV/AIDS skepticism, and vaccine conspiracies. The Future of Life Institute’s AI Safety Index gave xAI a failing grade, the lowest of any major AI lab assessed, compared to a C+ for Anthropic and a C for OpenAI.

On data privacy, xAI uses your X posts and conversations to train Grok. The Irish Data Protection Commission took xAI to the High Court in August 2024, resulting in a court-ordered suspension of the processing of EU users’ public posts for Grok training and mandatory deletion of previously ingested EU data.

For professional use, these facts about data handling and safety governance are not edge cases to note in passing. They’re material factors that should inform whether Grok is appropriate for your specific use case, especially in regulated industries or contexts involving sensitive data.

The Practical Bottom Line: Grok is a capable tool with real performance advantages, but the safety governance record as of early 2026 requires you to apply more caution than you would with other major AI providers.

FAQs

Is Grok AI free?

Yes, Grok.com offers a free tier with 10 prompts every two hours and access to Grok-3. Paid tiers start at $8/month (X Premium), $40/month (X Premium+), and $30/month (SuperGrok) for progressively heavier access.

Who built Grok?

Grok is built by xAI, Elon Musk’s AI company, founded in March 2023. The team includes researchers from DeepMind, OpenAI, Google Brain, and other leading AI labs.

Is Grok better than ChatGPT?

It depends on what you need. Grok leads on API pricing, context window (Grok-4.1 Fast), and real-time X data access. ChatGPT leads in ecosystem breadth, writing-quality consistency, image-generation quality, and a longer track record of safety governance.

What is Big Brain Mode?

Big Brain Mode is Grok-4’s most powerful reasoning setting. It applies extended computation to solve hard math, complex science, and multi-step logic problems more accurately than standard inference. It’s slower than Think Mode but produces meaningfully stronger results on challenging analytical tasks.

Does Grok have an API?

Yes, the xAI API is available at console.x.ai with token-based pricing. Grok-4.1 Fast starts at $0.20 per million input tokens and $0.50 per million output tokens, making it one of the most cost-competitive frontier-capable models available to developers.

What makes Grok different from other AI chatbots?

Three things make Grok genuinely distinct: real-time access to X data (no other major AI has this), a deliberate design philosophy favoring directness over caution, and API pricing that significantly undercuts every major competitor. Those advantages come alongside a content safety track record that requires more careful evaluation than competing tools.

Conclusion

Grok is a genuinely capable AI tool with specific advantages that are real and worth taking seriously, particularly the 2M token context window at $0.20/M input pricing, the real-time X data access that no competitor can replicate, and Big Brain Mode’s performance on hard reasoning tasks. If you’re a developer building high-volume text processing applications, the API economics alone make Grok-4.1 Fast worth evaluating. If you live on X and want AI integrated directly into your social media workflow without switching apps, Grok is the only option that delivers that natively.

That said, the safety record as of early 2026 is the most significant concern you need to weigh honestly against those advantages. The December 2025 image generation incident, the documented pattern of harmful content outputs, the failing grade on the AI Safety Index, and the active regulatory investigations across multiple countries are not background noise; they’re current, material facts about how this tool has been built and governed. Use Grok where its technical advantages genuinely matter for your workflow; apply more caution than you would with other major AI providers when it comes to image generation, sensitive content, and professional contexts where reliability and governance standards matter.

At Your Tech Compass, we publish practical guides and honest tech reviews to help users make smarter decisions.