Artificial intelligence is evolving at an unprecedented pace, and new models are constantly emerging to challenge the dominance of established players like ChatGPT, Claude, and Gemini. One of the most talked-about newcomers in this space is DeepSeek, an AI model that has gained attention for its strong reasoning abilities, large context handling, and, perhaps most notably, its focus on cost efficiency. Unlike many closed, heavily commercialized AI systems, DeepSeek takes a more open, research-oriented approach, making it especially popular among developers, researchers, and technically inclined users.

At the same time, DeepSeek is not without controversy. Questions around privacy, data handling, and long-term reliability have followed its rapid rise, making it important to look beyond the hype and examine what this AI actually offers, how it works, and where it fits in the broader AI ecosystem alongside tools like Claude, Perplexity, and Gemini. In this guide, we will break down everything you need to know in a clear, practical, and unbiased way so you can decide whether DeepSeek is worth using for your own needs.

What Is DeepSeek?

At its core, DeepSeek is an artificial intelligence system built around advanced large language models (LLMs) and designed for tasks ranging from natural language understanding and generation to reasoning and code generation. Originating from a Chinese AI company with roots in Hangzhou and backed by research initiatives focused on cost-efficient neural architectures, DeepSeek grew rapidly into a recognizable AI brand after its models began competing with mainstream systems like ChatGPT. Unlike some models that remain closed or proprietary, DeepSeek has released versions of its models, including R1 and V3 variants, that emphasize openness and efficiency.

DeepSeek’s chatbot implementations have gained substantial public attention, with some versions briefly surpassing more established competitors in app store charts. However, its position has been politically and ethically complex, with heightened scrutiny over privacy and data-handling practices in multiple countries.

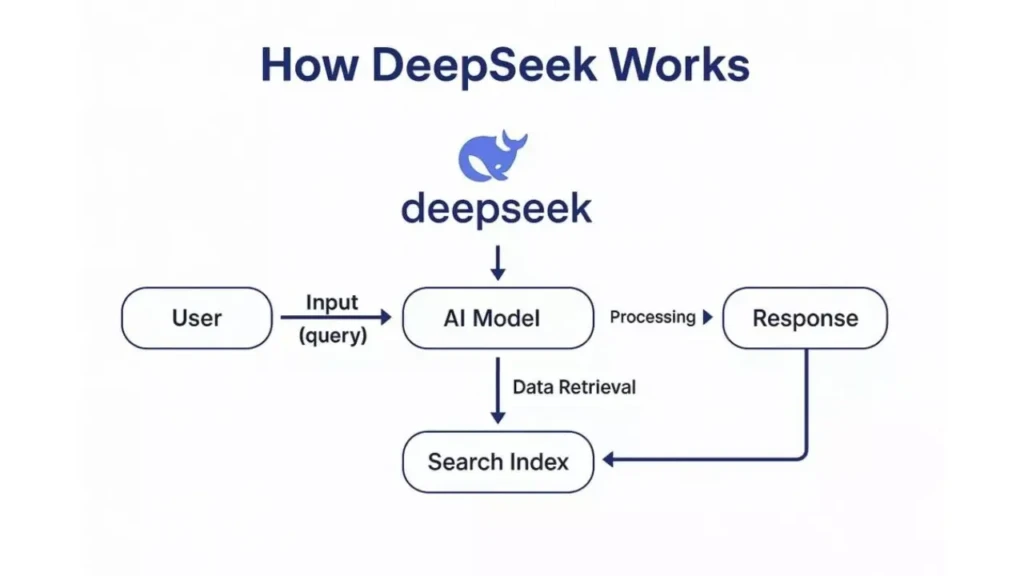

How DeepSeek Works

DeepSeek is built on neural architectures with a conceptual grounding similar to that of models like GPT and Gemini, but it incorporates innovations to reduce computational cost and improve reasoning efficiency. One key approach involves Mixture-of-Experts (MoE) designs, where only select “expert” sub-networks are activated for given inputs, reducing overall inference costs relative to models that activate all parameters.
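
To make the routing idea concrete, here is a minimal sketch of top-k expert selection in plain Python. It is a toy illustration of the general MoE technique, not DeepSeek's actual router: the names `moe_forward`, `make_expert`, and `gate_weights`, the linear gate, and the scaling "experts" are all assumptions made for this example.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(scale):
    """Hypothetical 'expert': a toy function that scales its input vector."""
    return lambda x: [scale * v for v in x]


def moe_forward(x, gate_weights, experts, top_k=2):
    """Route x to the top_k experts picked by a linear gate.

    Returns (output vector, indices of the experts that were evaluated).
    """
    # One gate score per expert: dot product of its gate row with x.
    scores = [sum(w * v for w, v in zip(row, x)) for row in gate_weights]
    # Keep only the top_k highest-scoring experts.
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:top_k]
    # Renormalize the gate weights over the chosen experts only.
    probs = softmax([scores[i] for i in chosen])
    output = [0.0] * len(x)
    for p, i in zip(probs, chosen):
        y = experts[i](x)  # experts that were not chosen are never evaluated
        output = [o + p * v for o, v in zip(output, y)]
    return output, chosen


rng = random.Random(0)  # fixed seed so the toy gate is reproducible
num_experts, dim = 8, 4
experts = [make_expert(scale=i + 1) for i in range(num_experts)]
gate_weights = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(num_experts)]
x = [0.5, -1.0, 2.0, 0.1]

output, chosen = moe_forward(x, gate_weights, experts, top_k=2)
print("experts evaluated:", chosen, "out of", num_experts)
```

With `top_k=2` and eight experts, only a quarter of the expert sub-networks run for this input, which is where the inference savings come from.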

Additionally, DeepSeek models often include specialized pathways for reasoning, mathematics, and handling long context. For example, models like DeepSeek-R1 and variants in the V3 series offer large context windows, enabling them to handle very long conversations or documents more effectively than models built around shorter prompts.

From a user’s perspective, these architectural choices translate into systems that attempt to balance reasoning depth, cost efficiency, and broad applicability, whether for code generation, logic problems, or natural language queries.

DeepSeek’s Key Features

DeepSeek’s architecture and tooling give it several distinctive capabilities:

Advanced Reasoning

DeepSeek models, particularly R1 and its successors, are designed to follow logical chains of thought and tackle complex, structured problems, including mathematical and logical ones, with an emphasis on deduction.

Large-Context Handling

With support for extended context lengths, up to tens of thousands of tokens in some implementations, DeepSeek can preserve more of a conversation or document history in a single pass than many legacy models.
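
As a rough illustration of why window size matters, the sketch below checks whether a document fits in one pass and otherwise splits it into overlapping chunks. Everything here is an assumption for the example: whitespace-separated words stand in for real tokens (an actual tokenizer will count differently), the 32,000-token limit is hypothetical, and `plan_passes` and `split_into_chunks` are illustrative names, not part of any DeepSeek tooling.

```python
def split_into_chunks(words, max_tokens, overlap=0):
    """Split a list of pseudo-tokens into windows of at most max_tokens,
    repeating `overlap` tokens between consecutive windows."""
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    if not 0 <= overlap < max_tokens:
        raise ValueError("overlap must be in [0, max_tokens)")
    step = max_tokens - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(words[start:start + max_tokens])
        if start + max_tokens >= len(words):
            break  # this window already reaches the end of the input
    return chunks


def plan_passes(text, context_limit, reserved_for_output, overlap=0):
    """Return the list of pseudo-token windows needed to process `text`."""
    budget = context_limit - reserved_for_output
    if budget <= 0:
        raise ValueError("reserved_for_output must be smaller than context_limit")
    words = text.split()  # crude stand-in for a real tokenizer
    if len(words) <= budget:
        return [words]  # the whole document fits in a single pass
    return split_into_chunks(words, budget, overlap)


document = "word " * 100_000  # a long synthetic document
passes = plan_passes(document, context_limit=32_000, reserved_for_output=2_000, overlap=200)
print("passes needed:", len(passes))
```

A larger context limit reduces the number of passes, and with it the amount of history that has to be stitched back together by overlap or summarization.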

Cost and Efficiency

Through MoE and optimized compute strategies, DeepSeek aims to operate at a significantly lower inference cost than many traditional LLMs, making it potentially more accessible for developers and businesses with limited budgets.
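
A back-of-the-envelope calculation shows where the savings could come from. The sketch assumes the common rule of thumb of about 2 FLOPs per active parameter per token for a forward pass, and it uses the parameter counts publicly reported for DeepSeek-V3 (about 671B total, about 37B active per token). Treat both as illustrative inputs rather than figures from this article; real costs also depend on hardware, batching, and memory traffic.

```python
def forward_flops_per_token(active_params):
    """Rule-of-thumb forward-pass cost: one multiply and one add per active parameter."""
    return 2 * active_params


total_params = 671e9   # all parameters in the model (publicly reported, approximate)
active_params = 37e9   # parameters actually used per token (publicly reported, approximate)

dense_cost = forward_flops_per_token(total_params)   # if every parameter were active
moe_cost = forward_flops_per_token(active_params)    # with sparse expert activation
fraction = moe_cost / dense_cost

print(f"MoE compute per token is about {fraction:.1%} of the dense equivalent")
```

Under these assumptions, each token touches only a small fraction of the model's parameters, which is the core of the cost argument for MoE designs.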

Multilingual and Mathematical Strength

DeepSeek’s training priorities include both natural language understanding across multiple languages and advanced mathematical reasoning, which can benefit academic and professional users alike.

What DeepSeek Is Used For

Like other generative AI models, DeepSeek’s capabilities extend across a variety of use cases:

- Natural Language Generation: Producing coherent and contextually detailed text for essays, reports, and creative content. If you’re looking to apply AI for social media content specifically, Social Media Post Generator tools show how AI is being embedded into everyday marketing workflows.

- Coding Assistance: Aiding in code generation, debugging, and explanation, with some models designed specifically for developer workflows.

- Research and Data Extraction: Summarizing documents and extracting structured insights from large text corpora.

- Problem Solving and Reasoning: Performing logic-intensive tasks, mathematical solutions, and structured planning.

- Multimodal Tasks: In some implementations, processing text alongside visual or code inputs.

These applications mirror the workflows supported by other leading AI models, each with its own strengths and tradeoffs: Claude prioritizes contextual depth, while Perplexity AI is noted for research and citation-oriented responses. Comparing these models side by side helps you choose the platform that best fits your specific needs.

For a practical overview of what to look for when choosing AI tools for business use, see this guide on MozPK.

DeepSeek vs Other AI Models

To understand where DeepSeek stands relative to other prominent AI systems:

| Feature / Capacity | DeepSeek | Claude | Gemini | ChatGPT |
| --- | --- | --- | --- | --- |
| Reasoning Efficiency | Strong | Very Strong | Strong | Strong |
| Cost Efficiency | High | Moderate | Moderate | Lower (premium tiers) |
| Long Context Handling | High | High | Very High | High |
| Multimodal Support | Present (select models) | Present | Advanced | Advanced |
| Openness (weights, APIs) | High / Open | Moderate | Moderate | Low to moderate |
| Privacy / Legal Issues | Active scrutiny | Stable | Stable | Stable |

DeepSeek’s open-weight philosophy and emphasis on efficient reasoning set it apart from many closed, proprietary models. At the same time, models such as Claude and Gemini often excel in alignment and safety, which are important in enterprise and professional environments. For an in-depth look at alternative models with strong context and safety features, see our articles on Claude AI Explained and How Gemini AI Works.

Pros and Cons of DeepSeek

The Pros

- Cost-Effective Inference: Designed to reduce computational cost relative to many proprietary models.

- Open Access: Some models and weights are available under open licenses, which encourage research and integration.

- Strong Context Management: Large maximum token windows improve performance on long documents.

The Cons

- Privacy and Security Concerns: DeepSeek has faced regulatory scrutiny across multiple countries over its data storage and privacy practices.

- Hallucination Risks: Like many generative models, it can produce misleading or incorrect information without clear disclaimers.

- Operational Stability: Some users report availability and reliability issues with free services, especially during peak demand.

These pros and cons show that while DeepSeek offers attractive innovation and accessibility, responsible deployment and verification remain essential in modern AI adoption.

Who Should Use DeepSeek

DeepSeek is a good fit for:

- Developers and Researchers looking for cost-efficient AI reasoning and code generation.

- Students and Educators needing accessible AI tools for learning and content analysis.

- Small Businesses seeking AI integration without premium subscription costs.

However, users should weigh data privacy implications and consider whether regulated environments require stricter compliance.

Who Should Consider Alternatives

DeepSeek may not be ideal for:

- Strict Enterprise Security Needs: Organizations prioritizing data isolation and local compliance.

- Safety-Sensitive Applications: Scenarios demanding rigorous alignment and content filtering.

- Reliable Uptime Demands: Cases where service availability and guaranteed performance are critical.

In these situations, alternative platforms with established infrastructure and compliance histories, such as Perplexity AI for research-centric workflows, may be more suitable.

Conclusion

DeepSeek is a fascinating example of how quickly the AI landscape is changing. By focusing on reasoning efficiency, long-context understanding, and lower operating costs, it challenges the idea that only massive, closed ecosystems can deliver high-performance AI. For developers, researchers, and curious power users, it offers a compelling blend of openness and capability that makes it genuinely useful for tasks such as coding, research, problem-solving, and long-form content analysis.

However, it is equally important to weigh these strengths against ongoing concerns around privacy, reliability, and regulatory scrutiny, especially for professional or business-critical use cases. DeepSeek is not a universal replacement for tools like ChatGPT, Claude, or Gemini, but rather another powerful option in a growing ecosystem of AI assistants. When used thoughtfully and with proper expectations, it can be a valuable addition to your workflow, and that is why I believe it deserves serious consideration.

FAQs

Is DeepSeek better than ChatGPT?

“Better” depends on your priorities; DeepSeek tends to emphasize cost efficiency and openness, whereas ChatGPT prioritizes safety features and a broad ecosystem of support.

What makes DeepSeek different from other AI models?

DeepSeek’s combination of MoE architecture, open accessibility, and efficient context handling distinguishes it from many legacy models.

Can DeepSeek be used for coding?

Yes, specific DeepSeek models are optimized for coding tasks and structured problem solving.